This guide on how to write better AI prompts comes from two years of testing what actually moves output quality. The honest verdict: prompt writing in 2026 isn’t about clever wording. It’s about clear specs. AI models are smart enough that long, complicated prompts often hurt more than they help. The 7 rules in this guide are the ones I keep coming back to: start with a clear role, give context upfront, specify the exact format you want, show examples when tone matters, add constraints to block bad output, iterate instead of rewriting from scratch, and save the prompts that work. Each rule includes a real before-and-after example so you can see the change in output quality. By the end you’ll spend less time fighting with AI tools and more time getting work done. None of this requires being a developer or an expert.

Why Most AI Prompts Fail (And Why It’s Not the Model’s Fault)

The most common reason people give up on AI tools is bad output. They ask ChatGPT or Claude something, get a generic response, and decide the AI “just isn’t very smart.” Almost always, the problem isn’t the model. The problem is the prompt.

Prompts that fail share three patterns. They’re too vague (“write about marketing”). They lack context (the model has no idea who the audience is, what tone fits, or what you’ve already tried). They don’t specify the format (so you get a wall of text when you wanted a 5-bullet list).

The shift in 2026 isn’t about learning more “prompt hacks.” It’s about treating prompts like specs you’d hand to a freelancer. A good freelancer with vague instructions will produce vague work. The same is true for AI. To write better AI prompts, you have to think like someone briefing a human, not like someone asking a search engine.

The 7 Rules to Write Better AI Prompts (With Examples)

Each of the 7 rules below is one I’ve used hundreds of times to write better AI prompts. They’re sequenced by impact: the earlier rules make the biggest single difference, the later ones compound your speed over time. Anthropic’s official prompt engineering docs cover the underlying theory if you want to go deeper after these basics.

Rule 1: Start with a Clear Role

Tell the AI who it is before you tell it what to do. This sets the tone, the depth, and the assumptions for everything that follows.

❌ Bad: “Explain TypeScript generics.”

✅ Good: “You are a senior frontend engineer explaining TypeScript generics to a mid-level developer who knows JavaScript well but is new to TypeScript. Use practical examples, not theory.”

The role plus audience combination is the single highest-leverage change you can make to any prompt. It primes the model to write at the right level instead of either oversimplifying or talking over your head.

Rule 2: Give Context Upfront, Don’t Drip-Feed

Most people start with a question, then add context after the AI gets it wrong. That wastes turns and burns through context windows. Front-load everything the model needs to know in the first message.

❌ Bad: “Write a product description.” (then 5 follow-up messages adding details)

✅ Good: “Write a 100-word product description for a $89/month project management SaaS aimed at agency owners with 5–20 employees. The unique value is unlimited client portals included, where competitors charge per client. Tone: confident but not salesy. Avoid the words ‘revolutionary,’ ‘powerful,’ or ‘leverage.’”

Claude in particular performs much better when you give it everything upfront in one message. Our Claude Opus 4.7 review covers why this model is especially good at handling long context-rich prompts.

Rule 3: Specify the Exact Output Format

If you don’t tell the AI what shape the output should take, it picks one for you. Usually a wall of paragraphs. Always specify the format.

❌ Bad: “Compare ChatGPT and Claude.”

✅ Good: “Compare ChatGPT and Claude in a markdown table with these columns: Best for, Pricing, Context window, Coding score, Writing quality. Then below the table, write a 2-sentence verdict on which one to pick for a freelance writer.”

Format specification is what turns AI output from “interesting” into “usable.” It saves you the post-processing work of reformatting everything.
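
If you reuse the same structure often, Rules 1 through 3 can be folded into a small template. Here’s a minimal Python sketch of that idea. The `PromptSpec` class, its field names, and the sample context and format values are my own illustrations, not part of any AI tool or library. The code only assembles text and doesn’t call a model.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    """Hypothetical container for the parts of a spec-style prompt."""
    role: str           # Rule 1: who the AI is
    audience: str       # Rule 1: who it is writing for
    context: str        # Rule 2: everything the model needs, upfront
    task: str           # what to produce
    output_format: str  # Rule 3: the exact shape of the output

    def render(self) -> str:
        """Join the parts into one message, role first and format last."""
        return "\n".join([
            f"You are {self.role} writing for {self.audience}.",
            f"Context: {self.context}",
            f"Task: {self.task}",
            f"Format: {self.output_format}",
        ])


# Illustrative values only; swap in your own.
spec = PromptSpec(
    role="a senior frontend engineer",
    audience="a mid-level developer who knows JavaScript but is new to TypeScript",
    context="The reader has never used generics.",
    task="Explain TypeScript generics with practical examples, not theory.",
    output_format="Three short sections, each with one code snippet.",
)
print(spec.render())
```

Because every field is required, the template won’t let you skip the role, the context, or the format.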

Rule 4: Show Examples When Tone or Style Matters

This is called few-shot prompting. Instead of describing the style you want (“write in a casual tone”), show it. Paste 1–3 examples that match what you’re going for.

❌ Bad: “Write 5 LinkedIn post hooks in my voice.”

✅ Good: “Write 5 LinkedIn post hooks in the style of these 3 of mine: [paste 3 hooks]. Match the rhythm, sentence length, and word choice. Don’t use my exact phrases.”

Few-shot prompting consistently outperforms description-only prompts on tone matching, format accuracy, and stylistic consistency. It’s one of the most underused techniques in beginner prompt writing.
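
If you build few-shot prompts often, the pattern is easy to script. This is a rough Python sketch, assuming a hypothetical helper named `few_shot_prompt` and made-up sample hooks. It only builds the text of the prompt; pasting it into a tool is still up to you.

```python
def few_shot_prompt(task: str, examples: list[str]) -> str:
    """Build a prompt that puts numbered style examples after the task."""
    # The guide suggests pasting 1 to 3 examples, so enforce that here.
    if not 1 <= len(examples) <= 3:
        raise ValueError("paste 1 to 3 examples")
    shown = "\n".join(
        f"Example {i}: {text}" for i, text in enumerate(examples, start=1)
    )
    return (
        f"{task}\n\n"
        "Match the rhythm, sentence length, and word choice of these examples. "
        "Do not reuse their exact phrases.\n\n"
        f"{shown}"
    )


# Made-up hooks, standing in for three of your own.
my_hooks = [
    "I raised my rates 40%. Nobody left.",
    "My best client came from a post with 12 likes.",
    "I stopped sending proposals. Revenue went up.",
]
print(few_shot_prompt("Write 5 LinkedIn post hooks in my voice.", my_hooks))
```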

Rule 5: Add Constraints (Tell the AI What NOT to Do)

Constraints are how you eliminate predictable AI-isms. Every model has tics: overusing certain words, ending with a summary nobody asked for, hedging when the prompt asked for a strong opinion. List those tics and tell the model to avoid them.

❌ Bad: “Write a blog intro about productivity.”

✅ Good: “Write a 75-word blog intro about productivity for remote workers. Avoid: starting with ‘In today’s world,’ the words ‘leverage’ or ‘unlock,’ generic motivational openers, and any sentence that sounds like a TED talk.”

Once you list the things you don’t want, the model stops producing them. This rule alone can take output from generic-sounding to genuinely usable.
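
Models don’t follow every constraint every time, so it can help to check the output before you use it. Below is a small Python sketch, assuming a hypothetical helper called `find_violations` (my own name, not part of any tool). It does a plain case-insensitive substring search plus a word count, nothing smarter.

```python
def find_violations(text: str, banned: list[str], max_words: int) -> list[str]:
    """Return a list of human-readable problems found in the model's output."""
    problems = []
    lowered = text.lower()
    for phrase in banned:
        # Simple substring match, so "unlock" also flags "unlocked".
        if phrase.lower() in lowered:
            problems.append(f"contains banned phrase: {phrase!r}")
    word_count = len(text.split())
    if word_count > max_words:
        problems.append(f"too long: {word_count} words (limit {max_words})")
    return problems


# A made-up draft that breaks two of the constraints.
draft = "Remote workers can leverage routines to unlock focus."
print(find_violations(draft, ["leverage", "unlock", "revolutionary"], max_words=75))
```

If the list comes back non-empty, paste the problems into your next message as feedback.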

Rule 6: Iterate, Don’t Rewrite from Scratch

When AI output isn’t quite right, most beginners scrap the whole prompt and start over. Don’t. Iterate from where you are.

❌ Bad: Get bad output → write a brand new prompt → get bad output again → repeat

✅ Good: Get partial output → reply with “this is closer, but make the second paragraph more specific and shorten the third” → get better output → reply again with one more refinement

Three short iterations almost always beat one long perfect prompt. The model has the context of what it already produced, which speeds up convergence on what you actually want.
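
For anyone scripting their prompts, iteration means appending to the conversation instead of replacing it. Here’s a minimal Python sketch of that. The `refine` helper is hypothetical, and the role/content message shape is an assumption modeled on how chat tools commonly represent a conversation. No model is called; the assistant turn is a placeholder.

```python
def refine(history: list[dict], feedback: str) -> list[dict]:
    """Return a new history with one more user turn of targeted feedback."""
    # Earlier turns are kept, so the model still sees its previous draft.
    return history + [{"role": "user", "content": feedback}]


history = [
    {"role": "user", "content": "Write a 75-word blog intro about productivity for remote workers."},
    {"role": "assistant", "content": "<first draft returned by the model>"},
]

# One small, specific refinement instead of a brand new prompt.
history = refine(history, "This is closer. Make the opening sentence more specific and cut the last sentence.")

for turn in history:
    print(f"{turn['role']}: {turn['content']}")
```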

Rule 7: Save the Prompts That Actually Work

The biggest time sink when you’re learning to write better AI prompts is rewriting prompts you’ve already written. Build a personal prompt library. Even a Notes app with 10 saved prompts will save you hours per month.

Save prompts for: blog post outlines, email replies in your voice, code review feedback, meeting summaries, social media post hooks, and any task you do more than twice. The first time you write a great prompt, copy it. Re-use, then refine the saved version over time.

This is the habit that compounds fastest in everyday AI use. By the fifth time you reuse a prompt, you’re already ahead of someone writing every prompt from scratch.

Before-and-After: A Real Prompt Comparison

Here’s what writing better AI prompts looks like in practice. Same task, two different prompts, dramatically different output quality.

The task: get help writing a cold outreach email.

❌ The lazy prompt:

“Write me a cold email to pitch my web design services.”

The output: Generic 4-paragraph email with phrases like “I hope this email finds you well,” “in today’s competitive landscape,” and “let’s hop on a quick call.” Trash. Going straight to the deleted folder.

✅ The spec prompt:

“You are a freelance web designer pitching a small e-commerce store owner who runs a $500K/year online furniture business. The store’s current site loads slowly and has a confusing checkout. Task: write a 90-word cold email that opens with a specific observation about their site (assume you visited it), states one concrete improvement worth $X in revenue, and ends with a low-pressure ask for a 15-minute call. Avoid: ‘I hope this email finds you well,’ ‘let’s hop on a call,’ generic compliments, jargon, and anything longer than 90 words.”

The output: A specific, observation-driven email that sounds like a real freelancer wrote it after actually looking at the prospect’s site. Worth sending.

Same model. Same task. Five times the work into the prompt and a hundred times the value out. That’s the entire game of how to write better AI prompts. Once you internalize the spec-prompt approach, every output you get gets visibly better.

Which AI Tools Need These Rules Most?

All of them, but each tool rewards a slightly different emphasis. Here’s what I’ve noticed across the major tools. For deeper tool-specific guidance, OpenAI’s prompt engineering guide is the most thorough free resource for ChatGPT specifically.

| Tool | Best Rule to Lead With | Why |
|---|---|---|
| ChatGPT (GPT-5.5) | Role + Format | Responds strongly to explicit role-setting and format specs |
| Claude (Opus 4.7) | Context Upfront | Performs best with rich front-loaded context, not drip-fed |
| Gemini (3.1 Pro) | Constraints + Format | Tends to over-explain, so constraints really help |
| Grok 4.3 | Role + Constraints | Has a default snarky voice that constraints can soften |
| Perplexity | Format + Constraints | Built for research, so format matters more than role |

If you’re picking your first paid AI tool, the prompting principles transfer cleanly. Our Claude vs ChatGPT 2026 comparison covers which one fits which use case best, and our Perplexity AI review covers the research-focused alternative.

Frequently Asked Questions

What’s the most important rule to write better AI prompts?

If you can only follow one rule, make it specifying the output format. Telling the AI exactly what shape the output should take (table, 5 bullets, 100-word paragraph, etc.) is the easiest change to apply to every prompt, and it reliably turns vague AI responses into usable output. Once you do this consistently, you’ll skip the constant copy-paste-reformat work most people waste hours on.

Do I need to learn prompt engineering to use AI well?

No. The phrase “prompt engineering” sounds technical, but the actual skill is just writing clearer instructions. If you can brief a freelancer or write an email to a coworker, you can write better AI prompts. The 7 rules in this guide are the entire skill in plain English.

Are long prompts always better than short ones?

No. Best practice in 2026 has shifted from “longer prompts” to “clearer specs.” A 50-word prompt with a clear role, context, and format almost always beats a 500-word prompt that rambles. Length without structure is noise. Structure with the right context is what works.

Should I use the same prompts for ChatGPT and Claude?

Mostly yes, but with small tweaks. Claude responds best when you front-load all context in one message. ChatGPT handles drip-fed context fine but really wants explicit role assignments. Gemini tends to over-explain unless you add constraints. The 7 rules work across all of them with minor adjustments.

How long does it take to get good at writing AI prompts?

Most people see a real difference within a week of consciously applying the 7 rules. Within a month of saving and reusing prompts that work, you’ll have a personal library that compounds your speed. The skill plateau hits around 3–6 months when you’ve internalized the patterns and stop having to think about them.

Are AI prompt courses worth paying for?

Almost never. Free guides like this one (and Anthropic’s, OpenAI’s, and Google’s official docs) cover everything paid courses teach. The skill comes from practicing, not from buying more courses. Save your money and spend it on actually using the tools instead.

Final Thoughts: Why Prompt Skills Matter More in 2026

The gap between someone who can write better AI prompts and someone who can’t isn’t a small productivity gain anymore. It’s the difference between AI saving you 30 minutes a week and AI saving you 10 hours a week. As models get more capable, the people who know how to brief them well pull further ahead.

What I’ve learned from teaching this skill: the 7 rules above are 90% of what it takes to write better AI prompts. The remaining 10% is just practice. Start with one rule (specifying the output format) and apply it to every prompt for a week. Add the next rule the following week. Within two months, you’ll be writing prompts that consistently get usable output instead of generic AI sludge.

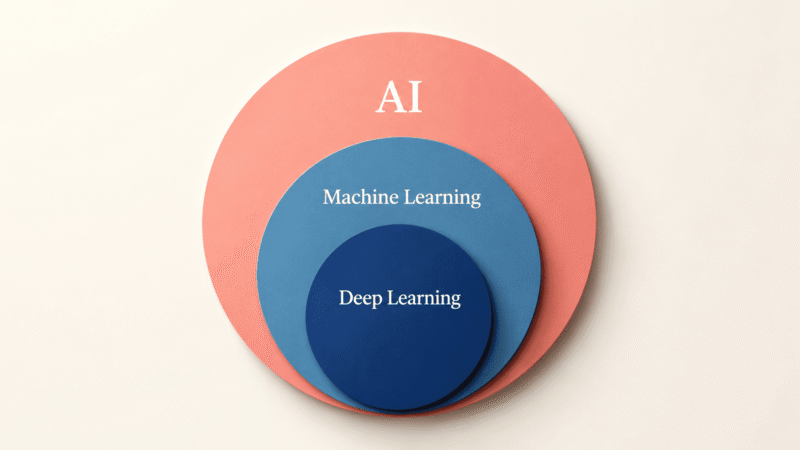

If you’re still building out your AI workflow, our guide to the best free AI tools in 2026 covers the most useful free options to practice these prompt rules on. And if you want the foundational picture of what AI actually is, our explainer on AI vs machine learning vs deep learning is a solid next read.