Anthropic just dropped Claude Opus 4.7, and the early numbers are turning heads. It beats GPT-5.5 on coding benchmarks, costs less per output token, and ships with 3x sharper vision and a 1 million token context window. So is it actually the best AI model right now, or is the hype overblown? In this Claude Opus 4.7 review, I’ll break down what’s genuinely better, where it falls short, how it compares to GPT-5.5 and the older Opus 4.6, and whether it’s worth switching to today.

What Is Claude Opus 4.7?

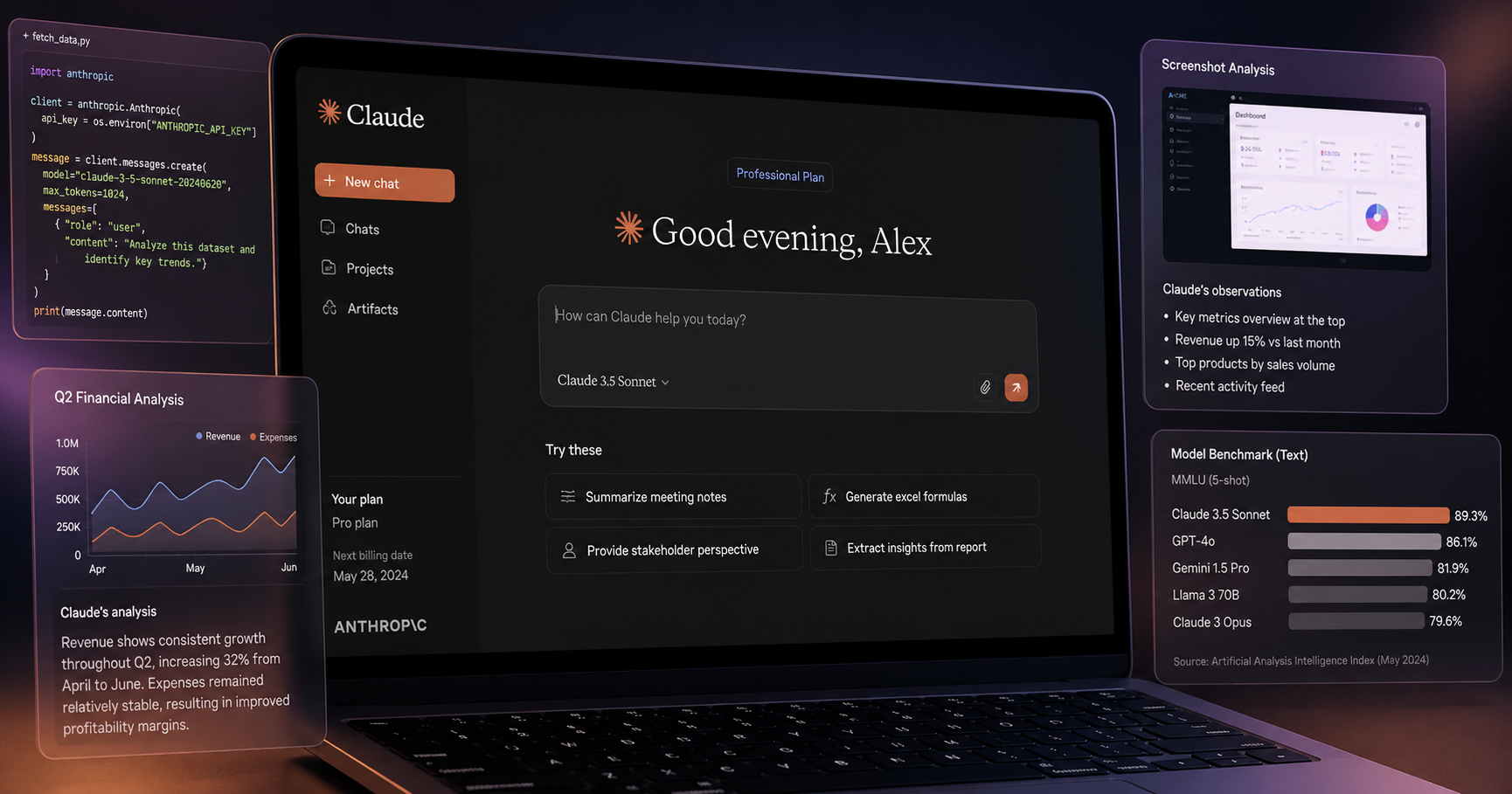

Claude Opus 4.7 is the latest flagship AI model from Anthropic, released on April 16, 2026. It’s their most capable publicly available model, sitting at the top of the Claude lineup above Sonnet and Haiku. The Opus series is built for the hardest tasks: complex coding, multi-step reasoning, agentic workflows, and now, high-resolution vision work.

What makes 4.7 different from a normal version bump is how much got rebuilt under the hood. New tokenizer, new reasoning system, new vision pipeline, and a meaningful jump in coding benchmarks. Pricing stays exactly the same as Opus 4.6, so you get the upgrade without paying more per token.

If you’re new to AI in general and want to understand how these models work first, our beginner’s guide to artificial intelligence covers the basics in plain English.

Claude Opus 4.7 vs Opus 4.6: What Actually Changed?

The jump from 4.6 to 4.7 is bigger than the version number suggests. Here’s what’s actually different.

| Feature | Opus 4.6 | Opus 4.7 |
|---|---|---|
| SWE-bench Verified | 80.8% | 87.6% |
| SWE-bench Pro | 53.4% | 64.3% |
| Max Image Resolution | 1,568px (1.15MP) | 2,576px (3.75MP) |
| LLM calls per task | 16.3 average | 7.1 average |
| Pricing | $5 / $25 per 1M tokens | $5 / $25 per 1M tokens |
| Tone | Warmer, more emojis | Direct, more opinionated |

The headline number: SWE-bench Pro jumped 10.9 percentage points (53.4% to 64.3%) in a single version. That’s one of the biggest single-version coding improvements Anthropic has ever shipped. Even more impressive, Opus 4.7 averages just 7.1 LLM calls per task compared to 16.3 for Opus 4.6, more than a 2x reduction. The model gets to the answer faster and uses fewer resources to do it.
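If you want to sanity-check those deltas yourself, here is a minimal sketch that recomputes them from the table figures. The function names are my own, and the only inputs are the numbers quoted above.

```python
# Figures copied from the Opus 4.6 vs 4.7 table above.
SWE_BENCH_PRO = {"opus_4_6": 53.4, "opus_4_7": 64.3}
LLM_CALLS_PER_TASK = {"opus_4_6": 16.3, "opus_4_7": 7.1}


def point_gain(old: float, new: float) -> float:
    """Absolute change in percentage points."""
    return round(new - old, 1)


def relative_gain_pct(old: float, new: float) -> float:
    """Change expressed as a percentage of the old score."""
    return round((new - old) / old * 100, 1)


def reduction_factor(old: float, new: float) -> float:
    """How many times smaller the new value is than the old one."""
    return round(old / new, 2)


pro_points = point_gain(SWE_BENCH_PRO["opus_4_6"], SWE_BENCH_PRO["opus_4_7"])
pro_relative = relative_gain_pct(SWE_BENCH_PRO["opus_4_6"], SWE_BENCH_PRO["opus_4_7"])
calls_factor = reduction_factor(
    LLM_CALLS_PER_TASK["opus_4_6"], LLM_CALLS_PER_TASK["opus_4_7"]
)

print(pro_points, pro_relative, calls_factor)
```

The distinction matters when reading benchmark tables: a 10.9-point gain on a 53.4% baseline is about a 20% relative improvement, and the call count drops by a factor of roughly 2.3.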

Claude Opus 4.7 Key Features

Here’s what’s actually new and worth knowing about.

1 Million Token Context Window

Opus 4.7 supports up to 1 million tokens of context with 128k maximum output. That’s enough to feed in an entire novel, a full codebase, or hundreds of research papers in one go. For long-document analysis, this matches what GPT-5.5 and DeepSeek V4 offer.

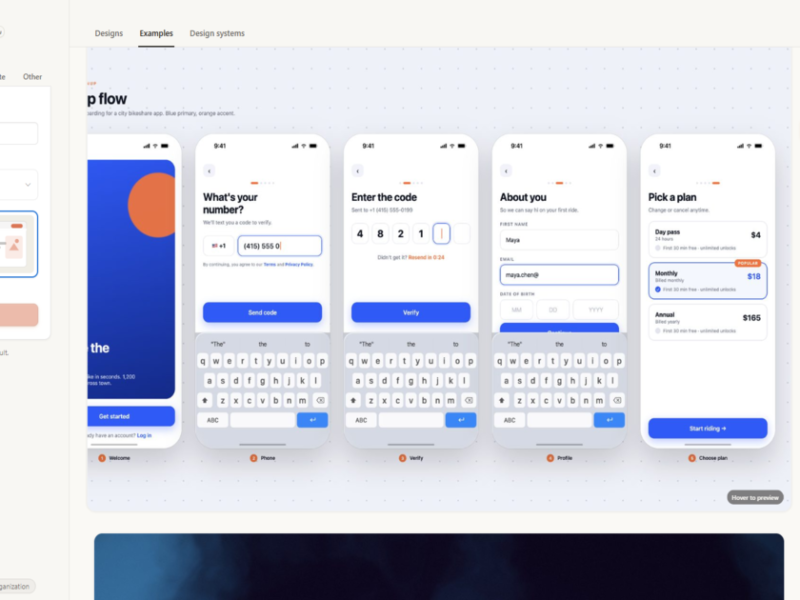

High-Resolution Vision

This is the most underrated upgrade. Image resolution went from 1,568 pixels (1.15 megapixels) to 2,576 pixels (3.75 megapixels). That’s roughly 3x the pixel count (3.75 / 1.15 ≈ 3.3). One early-access partner testing computer vision saw visual acuity jump from 54.5% on Opus 4.6 to 98.5% on 4.7. If you analyse screenshots, design files, scanned documents, or dense charts, this changes what’s possible.
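A quick way to see where the "3x" comes from is to compare pixel counts rather than edge lengths. This is a small sketch using only the figures quoted above; it assumes the pixel values refer to the longest image edge, which the source does not state explicitly.

```python
# Resolution figures quoted in this review.
OLD_EDGE_PX, NEW_EDGE_PX = 1568, 2576   # assumed to be the longest edge
OLD_MEGAPIXELS, NEW_MEGAPIXELS = 1.15, 3.75

# Total pixel count grows much faster than the edge length does.
area_ratio = NEW_MEGAPIXELS / OLD_MEGAPIXELS
edge_ratio = NEW_EDGE_PX / OLD_EDGE_PX

print(f"pixel count: {area_ratio:.2f}x, longest edge: {edge_ratio:.2f}x")
```

So the edge is only about 1.6 times longer, but the total number of pixels the model can look at is a little over three times larger.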

Adaptive Thinking and the New xhigh Effort Level

Opus 4.7 removes the old extended thinking budgets entirely. Adaptive thinking is the only thinking-on mode now, and it automatically adjusts how deep the reasoning goes based on task complexity. There’s also a new xhigh effort level that sits between the old high and max settings, giving you most of max’s reasoning power at noticeably lower latency.

Task Budgets

For developers building agents, task budgets let you set a hard token ceiling on a long-running agentic loop. The model sees a running countdown and prioritises work to finish gracefully when the budget runs out, instead of cutting off mid-task. This is one of those small features that quietly fixes a real production headache.
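To make the idea concrete, here is a toy simulation of a budgeted agent loop. It is not Anthropic's API: `TaskBudget`, `run_agent`, the step names, and the token costs are all hypothetical, and the sketch only illustrates the "stop early and finish gracefully" behaviour described above.

```python
from dataclasses import dataclass


@dataclass
class TaskBudget:
    """Hypothetical token ceiling for one agent run (illustration only)."""
    total_tokens: int
    wrap_up_reserve: int  # tokens held back for a final summary
    used_tokens: int = 0

    @property
    def remaining(self) -> int:
        return self.total_tokens - self.used_tokens

    def can_afford(self, estimated_cost: int) -> bool:
        # A step is allowed only if the wrap-up reserve survives it.
        return self.remaining - estimated_cost >= self.wrap_up_reserve

    def spend(self, tokens: int) -> None:
        self.used_tokens += tokens


def run_agent(steps: list[tuple[str, int]], budget: TaskBudget) -> list[str]:
    """Run (name, estimated_cost) steps in priority order within the budget."""
    completed = []
    for name, cost in steps:
        if not budget.can_afford(cost):
            break  # stop before the step rather than being cut off inside it
        budget.spend(cost)
        completed.append(name)
    # Use the reserve (or whatever is left of it) for a graceful finish.
    budget.spend(min(budget.wrap_up_reserve, budget.remaining))
    completed.append("summarise")
    return completed


steps = [
    ("read_repo", 4_000),
    ("write_patch", 3_000),
    ("run_tests", 2_500),
    ("refactor", 2_000),
]
budget = TaskBudget(total_tokens=10_000, wrap_up_reserve=1_000)
result = run_agent(steps, budget)
print(result, budget.remaining)
```

In this run the loop stops before `run_tests`, because running it would eat into the reserve, and the final summary still fits under the ceiling.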

Claude Opus 4.7 vs GPT-5.5: How Do They Compare?

Both models launched within a week of each other (Opus 4.7 on April 16, GPT-5.5 on April 23), so the comparison is unavoidable. Here’s how they actually stack up.

| Benchmark | Claude Opus 4.7 | GPT-5.5 | Winner |
|---|---|---|---|
| SWE-bench Pro | 64.3% | 58.6% | Opus 4.7 |
| SWE-bench Verified | 87.6% | ~80% | Opus 4.7 |
| MCP-Atlas (multi-tool) | 77.3% | — | Opus 4.7 |
| Terminal-Bench 2.0 | 69.4% | 82.7% | GPT-5.5 |
| OSWorld (computer use) | ~72% | 78.7% | GPT-5.5 |
| Output Pricing | $25 / 1M tokens | $30 / 1M tokens | Opus 4.7 |
| Token efficiency | More verbose | 72% fewer tokens | GPT-5.5 |

The honest summary: Opus 4.7 wins on raw coding ability and multi-tool agent work. GPT-5.5 wins on terminal use, computer control, and token efficiency. They’re not actually competing on the same axis. Opus 4.7 is built for reliability-critical, long-horizon coding work. GPT-5.5 is built for speed and broader agentic tasks. For a deeper look at how OpenAI’s models have evolved, our GPT-5 vs GPT-4 complete comparison covers the full GPT timeline.

Claude Opus 4.7 Pricing

Here’s the pricing breakdown for the API and chat plans.

| Plan | Cost | Best For |
|---|---|---|
| Claude.ai Free | $0 | Trying Opus 4.7 with daily message limits |
| Claude Pro | $20/month | Regular personal use, more daily messages |
| Claude Max | $100+/month | Power users, much higher message caps |
| API (Input) | $5 per 1M tokens | Developers |
| API (Output) | $25 per 1M tokens | Developers |

Pricing is identical to Opus 4.6. That’s actually a big deal because most version upgrades come with a price hike (GPT-5.5 doubled OpenAI’s prices, for example). Anthropic kept the cost flat while delivering meaningful performance gains.
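For API users, the per-token prices translate into a per-request cost like this. It is a rough sketch using the $5 / $25 per 1M token rates from the table; the function name and the example token counts are made up for illustration.

```python
# API prices quoted in the pricing table (USD per 1 million tokens).
INPUT_USD_PER_MTOK = 5.00
OUTPUT_USD_PER_MTOK = 25.00


def request_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimated cost of one API request at the listed rates."""
    total = input_tokens * INPUT_USD_PER_MTOK + output_tokens * OUTPUT_USD_PER_MTOK
    return total / 1_000_000


# Hypothetical request: a 200k-token codebase in, an 8k-token answer out.
cost = request_cost_usd(input_tokens=200_000, output_tokens=8_000)
print(f"${cost:.2f}")
```

Output tokens cost five times as much as input tokens, so long responses move the bill far more than long prompts do.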

How to Use Claude Opus 4.7 (Free and Paid Options)

Getting started is straightforward. Here’s the easiest path depending on how you want to use it.

- Free chat use. Go to claude.ai, sign up with your email, and start chatting. The free plan includes Opus 4.7 access with a small daily message limit. After you hit it, the model auto-switches to Sonnet for the rest of the day.

- Claude Pro ($20/month). Same price as ChatGPT Plus. Higher daily limits, priority access, and unlimited use of Sonnet.

- API access for developers. Sign up at the Anthropic API console, get a key, and start building. New accounts get a small trial credit.

- Free credits via Google Cloud. Sign up for Google Cloud Vertex AI and you get a $300 credit that works on Opus 4.7 for 90 days. This is the biggest free chunk available right now.

- OpenRouter. A third-party gateway that aggregates AI models. Sometimes offers free quota on Claude models within a week of launch.
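If you go the API route, a first request with Anthropic’s official Python SDK looks roughly like the sketch below. The model identifier `claude-opus-4-7` is my assumption, not something confirmed in this review, so check the model list in the console before using it. The helper names `build_request` and `ask` are also my own.

```python
import os

# Assumed model identifier; confirm the exact string in the API console.
MODEL_ID = "claude-opus-4-7"


def build_request(prompt: str, max_tokens: int = 1024) -> dict:
    """Assemble the keyword arguments for a Messages API call."""
    return {
        "model": MODEL_ID,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }


def ask(prompt: str) -> str:
    """Send one prompt and return the text of the first content block."""
    # Imported here so the rest of the file runs without the SDK installed.
    from anthropic import Anthropic  # pip install anthropic

    client = Anthropic(api_key=os.environ["ANTHROPIC_API_KEY"])
    response = client.messages.create(**build_request(prompt))
    return response.content[0].text


# Usage (needs an API key and network access):
#   print(ask("Summarise the trade-offs of a 1M-token context window."))
```

Keeping the key in the `ANTHROPIC_API_KEY` environment variable means it never ends up in your source code.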

For a wider look at what’s free in the AI world right now, our roundup of the best free AI tools in 2026 covers the full landscape.

Honest Pros and Cons of Claude Opus 4.7

Most reviews online are pure cheerleading. Here’s a balanced take based on real-world feedback from developers who’ve been using it for two weeks.

What’s Genuinely Better

- Coding accuracy is in another league. 87.6% on SWE-bench Verified beats GPT-5.5 by a real margin. For complex multi-file code work, this is the model to use

- Vision capability is dramatically improved. The 3.75 megapixel cap unlocks screenshot analysis, design review, and document workflows that simply did not work before

- Pricing stayed flat. Same cost as Opus 4.6 for meaningfully better performance

- Fewer LLM calls. 7.1 average vs 16.3 on Opus 4.6 means tasks finish faster and use less compute overall

Where It Falls Short

- Web research got worse. Source attribution accuracy dropped, and citation quality is lower than Opus 4.6. If you use Claude mostly for research, you may want to stick with the older model

- Creative writing feels mechanical. Long-form prose has become more bullet-pointed and less flowing. The warmer Opus 4.6 voice is gone, replaced by a more direct, opinionated tone

- Higher token usage. The new tokenizer counts roughly 12 to 18% more tokens for the same input, so even with the same per-token pricing, your bills can run slightly higher

- Slight latency bump. Opus 4.7 takes a touch longer to start responding than 4.6 on standard tasks

- API breaking changes. Extended thinking budgets are gone. If you had code depending on those, you’ll need to migrate
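To put a number on the tokenizer point above, here is a back-of-the-envelope sketch. It assumes the 12 to 18% increase applies evenly to everything you are billed for, which is a simplification, and the $100 baseline is a made-up example.

```python
def inflated_bill_usd(old_bill_usd: float, extra_token_fraction: float) -> float:
    """Bill for the same text if the tokenizer counts more tokens for it."""
    return round(old_bill_usd * (1 + extra_token_fraction), 2)


# Hypothetical workload that cost $100 per month on Opus 4.6.
low_estimate = inflated_bill_usd(100.0, 0.12)
high_estimate = inflated_bill_usd(100.0, 0.18)
print(f"${low_estimate:.2f} to ${high_estimate:.2f}")
```

Under that assumption, flat per-token pricing still means a bill somewhere between 12% and 18% higher for the same text.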

Frequently Asked Questions About Claude Opus 4.7

Is Claude Opus 4.7 free to use?

Yes, with limits. You can use Opus 4.7 for free at claude.ai with a small daily message cap. After that, the chat auto-switches to Claude Sonnet. For higher daily Opus limits, you need Claude Pro at $20 per month.

Is Claude Opus 4.7 better than GPT-5.5?

For coding, yes. Opus 4.7 scores 64.3% on SWE-bench Pro versus GPT-5.5’s 58.6%, and beats it on most coding benchmarks. For terminal work, computer use, and broader agentic tasks, GPT-5.5 still wins. They’re built for slightly different use cases.

Should I upgrade from Claude Opus 4.6 to 4.7?

If you mainly do coding, vision work, or agentic tasks, yes. The improvements are real and the price hasn’t changed. If you mainly use Claude for web research or creative writing, Opus 4.6 may still be the better choice because of the regressions in those areas.

What is the context window of Claude Opus 4.7?

1 million tokens of input with up to 128,000 tokens of output. That’s enough to feed in an entire codebase or hundreds of pages of documents in a single conversation.

Where can I access Claude Opus 4.7?

Through claude.ai (web and mobile), the Anthropic API, GitHub Copilot, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry. Most users will start with claude.ai for the easiest experience.

Final Thoughts

The honest verdict from this Claude Opus 4.7 review: it’s the best coding model available right now, with the strongest vision capability of any major AI model and the same price as the version it replaces. For developers, anyone working with screenshots and design files, and people who want a model that finishes complex tasks reliably, it’s an obvious upgrade.

For the bigger picture on how Claude stacks up against the other giant in the room, see our complete Claude vs ChatGPT 2026 comparison.

If you want to see how Anthropic’s newest model stacks up against the wider AI landscape, check our roundup of the best free AI tools in 2026 for more options across every category.