If you follow AI news, you’ve probably heard the buzz. DeepSeek just dropped its latest model, and the early verdict is hard to ignore: a model that nearly matches Claude and GPT-5 on coding benchmarks, costs up to roughly 9 times less per output token via the API, and is completely free to use in the browser right now. But is it actually worth switching to? By the end of this DeepSeek V4 review, you’ll know exactly what DeepSeek V4 is, how it compares to ChatGPT and Claude, and who should be using it today.

What Is DeepSeek V4?

DeepSeek V4 is the latest flagship model from DeepSeek, a Chinese AI research lab that first shocked the tech world in early 2025 when its V3 model matched GPT-4 performance at a fraction of the cost. V4 launched on April 24, 2026, and builds on the same approach: push benchmark performance as close to the frontier as possible while keeping the price far below what OpenAI and Anthropic charge.

The model comes in two versions. V4-Pro is the full flagship, built for the hardest tasks. V4-Flash is a smaller, faster, cheaper version designed for high-volume work where speed matters more than squeezing out the last percentage of accuracy. Both models are open-weight under the MIT licence, which means anyone can download the weights from Hugging Face and run them locally.

If you’re new to AI models and want to understand the basics before diving into comparisons, our beginner’s guide to artificial intelligence is a good place to start.

The Architecture That Makes It Cheap

Both V4 models use something called Mixture-of-Experts (MoE) architecture. The idea is straightforward: instead of activating the entire model for every single request, the model routes each input to a relevant subset of its parameters. Only the specialists needed for that task wake up.
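To make the routing idea concrete, here’s a toy sketch of top-k expert routing in plain Python. It is not DeepSeek’s implementation: the four "experts", the router scores, and the choice of k=2 are all made up for illustration, and real MoE layers route per token inside a neural network rather than over simple functions.

```python
import math

def softmax(scores):
    """Turn raw router scores into probabilities that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)  # renormalise over the chosen experts
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, experts, router_scores, k=2):
    """Run only the selected experts and blend their outputs by weight."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_scores, k))

# Hypothetical toy setup: four "experts" that are just simple functions.
experts = [lambda x: x + 1, lambda x: x * 2, lambda x: x - 3, lambda x: x * x]
router_scores = [0.1, 2.0, -1.0, 1.0]  # made-up scores for one input

selected = route_top_k(router_scores, k=2)
output = moe_forward(3.0, experts, router_scores, k=2)
print("experts used:", [i for i, _ in selected])
print("blended output:", output)
```

Only two of the four experts ever run for this input, which is the whole point: the unused experts cost nothing at inference time for that token.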

V4-Pro has 1.6 trillion total parameters but activates only about 49 billion per token. V4-Flash has 284 billion total parameters with 13 billion active per token. This is why the models are so much cheaper to run than their raw size suggests. You get the intelligence of a massive model without paying for all of it every time you send a message.
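A quick bit of arithmetic shows how small the active slice is. The sketch below uses only the parameter counts quoted above; the function and dictionary names are just for illustration.

```python
# Parameter counts as quoted in this article.
models = {
    "V4-Pro": {"total": 1.6e12, "active": 49e9},
    "V4-Flash": {"total": 284e9, "active": 13e9},
}

def active_fraction(total, active):
    """Share of the model's parameters used for a single token."""
    return active / total

for name, p in models.items():
    share = active_fraction(p["total"], p["active"])
    print(f"{name}: {share:.1%} of parameters active per token")
```

By these numbers, V4-Pro touches roughly 3% of its parameters per token and V4-Flash under 5%.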

Both versions support a 1 million token context window. That’s enough to feed in an entire novel, a large codebase, or dozens of research papers in a single conversation.
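If you want a rough feel for what fits, here’s a back-of-the-envelope estimator. The 1.3 tokens-per-word ratio is a common rule of thumb for English text, not a figure from DeepSeek, and the real count depends on the tokenizer and the content (code and non-English text usually run higher).

```python
CONTEXT_WINDOW = 1_000_000  # tokens, as quoted in this article
TOKENS_PER_WORD = 1.3       # assumption: rough rule of thumb for English prose

def estimate_tokens(word_count):
    """Very rough token estimate from a word count."""
    return round(word_count * TOKENS_PER_WORD)

def fits_in_context(word_count):
    """True if the estimated token count fits in the context window."""
    return estimate_tokens(word_count) <= CONTEXT_WINDOW

novel_words = 100_000  # hypothetical novel length
print(estimate_tokens(novel_words), fits_in_context(novel_words))
```

Under that assumption, a 100,000-word novel uses only a fraction of the window, leaving plenty of room for follow-up questions.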

DeepSeek V4 Pro vs Flash: What’s the Actual Difference?

The first question most people have: do you actually need V4-Pro, or is Flash good enough?

On the main coding benchmark (SWE-bench Verified), V4-Flash scores 79.0% and V4-Pro scores 80.6%. That’s a 1.6 percentage point gap. On LiveCodeBench, it’s 91.6% vs 93.5%, a gap of 1.9 points. For the vast majority of everyday tasks (writing, research, and standard coding work), that gap is invisible in practice.

| Feature | V4-Flash | V4-Pro |
|---|---|---|
| Total Parameters | 284 billion | 1.6 trillion |
| Active Per Token | 13 billion | 49 billion |
| Context Window | 1 million tokens | 1 million tokens |
| SWE-bench Verified | 79.0% | 80.6% |
| LiveCodeBench | 91.6% | 93.5% |
| API Output Cost | $0.28 per million tokens | $1.74 per million tokens |
| Best For | Speed, high volume, cost savings | Complex reasoning, hard code |
| Open Source | Yes (MIT) | Yes (MIT) |

If you’re using the free web chat, you get V4-Pro by default. If you’re a developer paying per API token, V4-Flash delivers nearly identical results at roughly 6x lower cost. For most use cases, Flash is the smarter pick.

DeepSeek V4 Review: Benchmarks vs ChatGPT and Claude

This is where the DeepSeek V4 review gets genuinely interesting. DeepSeek has been positioning V4 as a near-frontier model, so let’s look at what the numbers actually say.

| Benchmark | DeepSeek V4-Pro | GPT-5.4 | Claude Opus 4.6 | Gemini 3.1 Pro |
|---|---|---|---|---|
| SWE-bench Verified | 80.6% | ~75% | 80.8% | ~74% |
| LiveCodeBench | 93.5% | n/a | 88.8% | n/a |
| Terminal-Bench 2.0 | 67.9% | n/a | 65.4% | n/a |
| GPQA Diamond | n/a | 92.8% | n/a | 94.3% |
| Codeforces Rating | 3,206 | Lower | n/a | n/a |
| Open Source | Yes | No | No | No |
| API Output Cost | $1.74/M tokens | ~$10/M tokens | ~$15/M tokens | ~$7/M tokens |

On coding, V4-Pro is genuinely competitive. It scores 80.6% on SWE-bench Verified, just 0.2 percentage points behind Claude Opus 4.6 (and you can see how Claude Opus 4.7 stacks up in our full review). It actually beats Claude on LiveCodeBench (93.5% vs 88.8%) and Terminal-Bench 2.0 (67.9% vs 65.4%). For a model that costs roughly 9x less than Claude to run via API, that’s a strong result.

But the benchmarks don’t tell the whole story. Early real-world testers have flagged that V4-Pro sometimes struggles with tasks that need nuanced multi-step reasoning or precise factual recall. On a structured 38-task benchmark covering coding, reasoning, and research, V4-Pro timed out on about 24% of the hardest problems. So the gap between its headline benchmark scores and its real-world performance on the most difficult tasks is real.

Where ChatGPT and Claude still lead: creative and long-form writing, multi-tool integrations, and production reliability (we cover the full Claude vs ChatGPT head-to-head in a separate post). GPT-5.4 and the newer GPT-5.5 win on computer use tasks. Claude still produces the best output for nuanced writing. These are meaningful differences depending on what you actually need the model for. For a full breakdown of how GPT has evolved, our GPT-5 vs GPT-4 complete comparison covers exactly what changed between the two generations.

DeepSeek V4 Pricing: How Cheap Is It?

The price gap between DeepSeek and its competitors is the most striking part of this story.

| Model | Input (per million tokens) | Output (per million tokens) |
|---|---|---|
| DeepSeek V4-Flash | ~$0.02 | $0.28 |
| DeepSeek V4-Pro | $0.145 | $1.74 |
| Gemini 3.1 Pro | ~$1.25 | ~$7 |
| GPT-5.5 | ~$2 | ~$10 |
| Claude Opus 4.7 | ~$3 | ~$15 |

V4-Pro costs $1.74 per million output tokens. Claude Opus 4.7 costs around $15. That’s a gap of roughly 9x, not a rounding error. For developers running large volumes of API calls, or anyone building an AI product, this difference genuinely changes what’s financially viable. And for regular users who just want to chat? It’s completely free in the browser.
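To see what that means for a real bill, here’s a small cost calculator built on the prices in the table above. Treat it as a sketch: several of those prices are approximate (marked with ~ in the table), the workload of 10 million input and 2 million output tokens is a made-up example, and it ignores things like caching discounts or volume tiers.

```python
# (input, output) price in USD per million tokens, from the table above.
PRICES = {
    "DeepSeek V4-Flash": (0.02, 0.28),
    "DeepSeek V4-Pro": (0.145, 1.74),
    "Gemini 3.1 Pro": (1.25, 7.0),
    "GPT-5.5": (2.0, 10.0),
    "Claude Opus 4.7": (3.0, 15.0),
}

def cost(model, input_tokens, output_tokens):
    """Estimated API cost in USD for a given token volume."""
    price_in, price_out = PRICES[model]
    return (input_tokens / 1e6) * price_in + (output_tokens / 1e6) * price_out

# Hypothetical monthly workload: 10M input tokens, 2M output tokens.
for model in PRICES:
    print(f"{model}: ${cost(model, 10_000_000, 2_000_000):.2f}")
```

For that workload, V4-Pro comes to about $4.93 and Claude Opus 4.7 to about $60, so the gap widens once input tokens are counted too.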

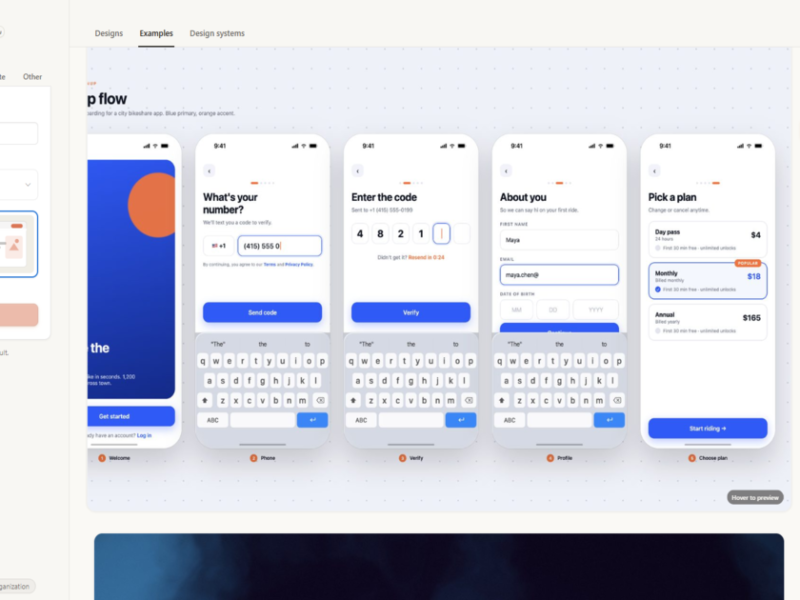

How to Use DeepSeek V4 for Free

Getting started takes about two minutes.

- Go to deepseek.com and open the chat

- Sign in with your email address, Google account, or WeChat

- Confirm the active model at the top of the chat shows V4-Pro

- Start typing. No credit card needed

The free tier includes the full 1 million token context window, file uploads (PDFs, images, and code), and live web search. At the top of the text box, you’ll see three reasoning modes: Non-Think (fast, standard responses), Think High (deeper reasoning for harder problems), and Think Max (maximum effort for the most complex tasks). For most requests, Non-Think is fine. Switch to Think High when you’re working through a tricky problem.

DeepSeek doesn’t publish a hard daily message limit. The free tier slows down under heavy load during peak hours, but it rarely blocks you outright.

Running It Locally

Because both models are MIT-licensed and open-weight, you can download them from Hugging Face and run them on your own hardware. V4-Pro requires very significant compute to run at full precision. V4-Flash is far more practical for local deployment on a high-end GPU setup. For most people, the free web chat is the easier path.
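To get a sense of what "significant compute" means, you can estimate the memory needed just to hold the weights. This is a rough sketch: it assumes every parameter must sit in memory (with MoE, all experts need to be loaded even though only a few run per token), it ignores the KV cache and activations, and the 16-bit and 4-bit precisions are illustrative choices, not formats confirmed for the released weights.

```python
def weights_gb(total_params, bits_per_param):
    """Approximate memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return total_params * bits_per_param / 8 / 1e9

# Parameter counts as quoted in this article.
PARAMS = {"V4-Pro": 1.6e12, "V4-Flash": 284e9}

for name, params in PARAMS.items():
    for bits in (16, 4):  # assumed precisions for illustration
        print(f"{name} at {bits}-bit: ~{weights_gb(params, bits):,.0f} GB")
```

By this estimate, V4-Pro needs about 3,200 GB at 16-bit and V4-Flash about 568 GB, dropping to roughly 142 GB for Flash at 4-bit. Even the smaller model is well beyond a single consumer graphics card.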

Who Should Actually Use DeepSeek V4?

Here’s an honest take on who this model works well for and where it falls short.

Good fit if you:

- Write code regularly and want a model that competes with Claude on coding benchmarks at a fraction of the API cost

- Need to process very long documents and a 1 million token context window is important to you

- Are currently paying for a ChatGPT Plus or Claude Pro subscription and mostly use it for coding or research tasks. The free tier is worth trying first

- Are building AI agents or AI products and API costs are a real concern. Check out our guide on making money with AI in 2026 for more on how developers are building with these tools

Might not be the right pick if you:

- Need high-quality creative or long-form writing. Claude still produces noticeably better output for nuanced writing tasks

- Rely on image generation. DeepSeek V4 doesn’t generate images, and image uploads, although listed as a free-tier feature, aren’t reliably working yet in the early release

- Want the best Google Workspace integration. Gemini is the clear winner there

- Are handling sensitive business or personal data. DeepSeek is a Chinese company with servers in China. For non-sensitive personal use it’s fine, but check their privacy policy before putting anything confidential in

- Need a battle-tested production API. V4 launched four days ago. The infrastructure hasn’t been stress-tested at scale the way OpenAI’s and Anthropic’s have over years of production use

Frequently Asked Questions About DeepSeek V4

Is DeepSeek V4 free to use?

Yes. You can use DeepSeek V4-Pro for free at deepseek.com with no credit card required. The free tier includes the full 1 million token context window, file uploads, and web search. Paid API access is available for developers at much lower rates than GPT or Claude.

Is DeepSeek V4 better than ChatGPT?

On coding benchmarks, yes. V4-Pro scores 80.6% on SWE-bench Verified, which is ahead of GPT-5.4 and essentially tied with Claude Opus 4.6. For creative writing, image generation, and multi-tool integrations, ChatGPT still leads. It depends on what you’re using it for.

What’s the difference between V4-Pro and V4-Flash?

V4-Pro is the flagship model (1.6 trillion total parameters, 49 billion active) and handles the hardest reasoning and coding tasks better. V4-Flash is smaller (284 billion total, 13 billion active), faster, and costs about 6x less per output token via the API. The benchmark gap between them is only 1.6 percentage points on SWE-bench Verified, so for most day-to-day use, Flash is the better value.

Is DeepSeek V4 safe to use?

For general personal tasks, yes. But DeepSeek is a Chinese company and its servers are based in China. If you’re handling sensitive business data, client information, or anything regulated, review their privacy policy carefully and consider whether the self-hosted option makes more sense for your situation.

Can I run DeepSeek V4 locally on my own computer?

Yes, both models are open-weight and available on Hugging Face under the MIT licence. V4-Pro requires significant hardware to run locally, since it’s a 1.6 trillion parameter model. V4-Flash is far more accessible, though at 284 billion total parameters it still calls for a high-end GPU setup. For most people, the free web chat is the simpler option.

Final Thoughts

That’s the full picture from this DeepSeek V4 review. The headline is accurate: a model that nearly matches the frontier on coding benchmarks, runs for free in the browser, and costs a fraction of Claude or GPT via API. It’s not the right tool for everything. Creative writing, image tasks, and anything that needs the most reliable production infrastructure still belong to ChatGPT and Claude.

But for coding, research, long-document analysis, and anyone building cost-sensitive AI products? DeepSeek V4 deserves a serious look. And with the model just four days old, it’s only going to improve from here.

Want to see how it stacks up against the broader field? Our roundup of the best free AI tools in 2026 covers all the top options across every category.